Google’s Customer Engagement Leadership Team:

Tim Frank

Qiushuang Zhang

Aastha Gaur

Ravi Narasimhan

Abheek Gupta

Roman Karachinsky

Doris Neubauer

Sandeep Beri

Ian Suttle

Shashi Upadhyay

Leo Cheng

Tony Li

Natalie Mason

Vicky Ge

Google had the opportunity, directly or through its representatives, to apply the best research on artificial intelligence and machine learning to its interactions with customers, transforming its approach and creating more value for its customers. Tim Frank and Google’s customer engagement leadership team describe this AI transformation, rooted in prioritizing customers.

Google was founded “to organize the world’s information and make it universally accessible and useful.” The company is guided by a simple tenet: focus on the user and all else will follow. Today, the company is home to a range of consumer products with more than 1 billion users and business products with more than 1 million users. These products have succeeded by focusing on the user. Each of them has also gone through an exponential growth phase which required careful management. We have learned that you cannot solve exponential problems with linear solutions.

The customer engagement organization at Google is the human face of the company, facilitating billions of interactions each month. As the business grows, the number of its customer interactions grows even faster, ranging from a customer filing a ticket online to an in-person meeting with a business client’s representative. Our interactions start with tens of thousands of Google representatives providing customer support and extend to custom tools and processes that improve our human interactions at scale. Today, we have taken this one step further, bringing even more value to our customers through AI-enhanced experiences. This new application of AI caused our marketing, sales, and support teams to grow, while we delivered great experiences to our users.

The Customer-First Approach

Customer expectations grow continuously, and younger customers drive trends that are widely adopted by all ages. Today, we work to create content with nothing to snag or delay our customers, termed ‘frictionless.’ Users want all their feeds to be hyper-personalized and their content to be ‘snackable,’ quick and easy to digest. Few have the time or patience to wait to talk on the phone. Users feel that their digital experiences should just work, and when they do not, problems should be solved on their terms with little friction or effort. Our customers want one personalized, proactive, and contextually appropriate Google experience wherever they come in contact with Google products, yet in a way that respects privacy. To fulfill that wish, we used sophisticated software, building a simple customer-centered model to guide how we use AI to engage customers.

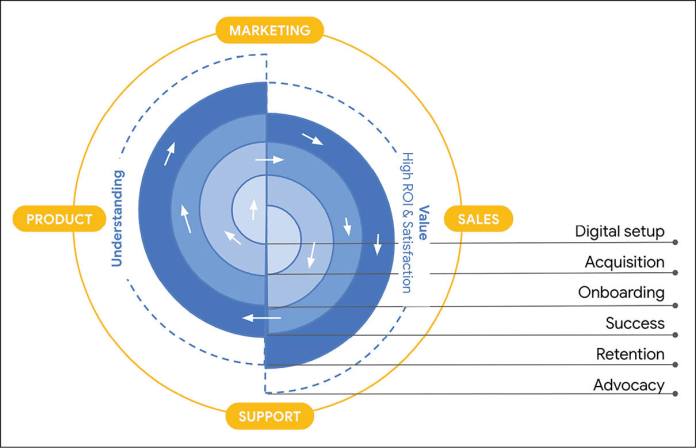

The customer success flywheel (Figure 1) shows how we build solutions informed by deep customer understanding, insisting that we provide our customers both value and high satisfaction (CSAT¹). This process starts with a customer’s earliest interaction with Google (middle of the flywheel). As we learn more about our customers’ preferences in products, marketing, sales, and support, we can bring them more value. This process creates a positive feedback loop and makes every point of contact or surface customer-aware. We deliver ‘one size fits one’ solutions globally to every one of our customers. Our success metrics also focus on customers, understanding them in order to bring them value and satisfaction.

Prioritization Framework

We prioritize bringing our customers the best experiences and outcomes, regardless of how we deliver them. One way for us to confirm that we are optimizing properly is to ask if we would be comfortable sharing our metrics dashboards with our customers. As organizations are pressured to focus on metrics within their control, it is easy to optimize for success that is not customer-informed or for the customer’s benefit. By anchoring our work in customer understanding, value, and satisfaction, we are able to orient our efforts toward our customers’ needs.

To help ourselves think about these opportunities, we built a framework (Figure 2) for transforming customer engagement that showcases the need for AI if we are to break out of the ‘incremental’ quadrant. It also guides us to think about pushing the limits of what is possible for humans.

The y-axis describes customer value and shows the inherent current limit of human return on investment (ROI), given that labor is not free. This scale reveals that we need to raise productivity, using tools like smart reminders, meeting scheduling, auto-pitch deck creation, and more. The x-axis shows how challenging the necessary tasks are for humans. We need the powerful assistance of AI, especially for tasks whose volume, complexity, and optimization are too great for humans to grasp or undertake.

The path to transformation is through foundational AI research. At Alphabet, we are lucky to have world-class research teams like DeepMind² and Google Research³ pioneering AI breakthroughs. We are pushing toward a future in which we can maximize the best of both human and technology intelligence, delivering the most customer value in the long term.

Smart Focus: Engages Customers to Significantly Improve Outcomes

We are developing tools to help our sales and support representatives serve customer needs, hit key performance indicators (KPIs), and help to run a high-performance organization. They have access to insights that can help our customers meet their own business objectives, but because the breadth of knowledge is so great, it can be paralyzing for them to decide where to spend their time. We needed to build AI that would help them to better find the right focus, improving the outcomes of our customers.

An innovative AI application

Past efforts to direct human attention often used hard-coded conditional logic and heuristics to produce average performance; we set out to achieve three things:

- We used AI to build an intelligent detector, which automatically attunes its sensitivity to different representatives and customers. As a result, its ratio of useful information to filler is very high, so our representatives know that listening will help. Standard anomaly detection would not have worked for such a large variety of customer data on different scales.

- We converted heuristic-explanation problems into multi-dimensional, multi-metric correlations and attribution models, which significantly reduced the computational complexity. So no matter the suggestion, we can explain the rationale to our representatives, who can then evaluate the logic and explain it to customers or colleagues.

- We designed our experiments so we could measure the cause of the model’s effects and use feedback to train and improve the model.
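
The first point above, a detector whose sensitivity adapts to each customer's own scale, can be illustrated with a much simpler stand-in technique. The sketch below is not the model described in this article; it assumes a robust z-score (median and median absolute deviation) computed per customer, and the function names, threshold, and sample data are all hypothetical.

```python
from statistics import median


def robust_z(history, latest):
    """Score `latest` against one customer's own history using median and MAD."""
    med = median(history)
    mad = median(abs(x - med) for x in history)
    if mad == 0:
        # Flat history: no change scores 0, any change scores as extreme.
        return 0.0 if latest == med else float("inf")
    # 1.4826 rescales the MAD to match the standard deviation for normal data.
    return (latest - med) / (1.4826 * mad)


def flag_changes(metrics_by_customer, threshold=3.5):
    """Return {customer: score} for customers whose latest value is unusual
    relative to their own baseline (threshold is an illustrative assumption)."""
    flagged = {}
    for customer, series in metrics_by_customer.items():
        *history, latest = series
        if len(history) < 3:
            continue  # too little history to judge
        score = robust_z(history, latest)
        if abs(score) >= threshold:
            flagged[customer] = score
    return flagged


# Hypothetical daily metric values for two customers on very different scales.
example = {
    "small_shop": [10, 11, 9, 10, 12, 10, 4],
    "large_brand": [100000, 104000, 98000, 101000, 99000, 103000, 102000],
}
print(flag_changes(example))
```

Because each series is normalized by its own spread, the small account's drop from about 10 to 4 is flagged while the large account's change of a few thousand is treated as ordinary variation, which a single absolute threshold could not do.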

Resulting customer engagement

These initiatives allow our representatives to start their day knowing whether there is a high priority item ahead. When there is, they can investigate the context, prepare the right response for the specific customer, and be well prepared to help when they converse with the customer.

So far, this tool has improved the outcomes of both customers and representatives. Customers can now avoid unplanned campaign delays and optimize high ROI campaigns while getting more for what they spend on Google.

In aggregate, customers found 23.5 percent more value with Google and 15 to 32 percent greater confidence. We measured this increase in a randomized controlled trial, comparing how much customers spent over the subsequent three weeks with the spending of a control group. Representatives also appreciated the help from the system, reporting 90 percent satisfaction, double the level they reported with non-AI attempts.

Guided Self-Help: Diagnoses and Resolves Issues Using Scalable, Intelligent Support

Each year, billions of customers interact with hundreds of Google’s consumer products including search, Android, and YouTube. As Google’s offerings and customer base grow more diverse, so does the complexity of identifying and resolving issues. Customers can ask for help in many different ways, and the problems they encounter may have any number of root causes. One way that Google Support increases self-help offerings, improves representative productivity, and boosts customer satisfaction and resolution is through large language models (LLMs).⁴ These promising applications allow us to offer AI-guided support. Our customers can interact naturally with intelligent, automated support, such that they feel empowered by self-help and prefer it to other support alternatives. We have invested in the two critical steps to addressing customers’ problems: diagnosis and resolution. To diagnose, we find the root cause of an issue by examining its symptoms. To resolve the issue, we use this diagnosis to help our customers choose the best course. This self-help advice is informed by a wealth of information, encompassing help articles and representative transcripts. But customers still bear a significant burden; they must articulate their issue and then navigate a tree of symptoms and causes, trying out different solutions.

Innovative AI application

Our strategy lightens the customer’s burden in diagnosis and resolution. For diagnosis, we use cutting-edge LLM applications to efficiently understand the issues as the customer describes them. For resolution, we use a multitask unified model (MUM) to rank and serve solutions through support interfaces like our search page and escalation forms.

These AI applications spare our customers unnecessary complexity. In some cases, we can now take customers directly to the right solution. We have seen a 20 to 40 percent increase in our ability to answer questions depending on product area, as well as a quality of neutral to positive in the answers at all points of customer contact.

Resulting customer engagement

One primary metric we use to measure the success of this self-help tool is the rate of support escalation: instances in which a customer cannot solve the problem with the tool’s support and moves on to a human representative. Our experiments showed a 5.3 percentage point (pp) reduction⁵ in escalations, or 2.8 times the performance of the latest neural networks that use long short-term memory (LSTM).⁶ We achieved this contact rate reduction while maintaining our high CSAT.
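
For readers less familiar with percentage-point results, the following sketch shows one common way a reduction in escalation rate and its 95 percent confidence interval can be computed from experiment counts. It assumes a normal-approximation (Wald) interval for a difference in proportions; the article does not state which method was used, and the counts below are hypothetical, chosen only so that the point estimate is 5.3pp.

```python
from math import sqrt
from statistics import NormalDist


def escalation_reduction_ci(control_escalated, control_total,
                            treated_escalated, treated_total, level=0.95):
    """Return (reduction, lower, upper) in percentage points.

    Uses the normal-approximation interval for a difference of two
    independent proportions (an assumption, not the article's stated method).
    """
    p_control = control_escalated / control_total
    p_treated = treated_escalated / treated_total
    diff = p_control - p_treated
    std_err = sqrt(p_control * (1 - p_control) / control_total
                   + p_treated * (1 - p_treated) / treated_total)
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for 95 percent
    return diff * 100, (diff - z * std_err) * 100, (diff + z * std_err) * 100


# Hypothetical counts: 30.0% of control sessions escalate vs. 24.7% with the tool.
reduction, lower, upper = escalation_reduction_ci(3000, 10000, 2470, 10000)
print(f"reduction: {reduction:.1f}pp (95% CI {lower:.1f}pp to {upper:.1f}pp)")
```

An interval that excludes zero indicates that the reduction is unlikely to be due to chance at the chosen confidence level.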

| Case study | Quadrant | Description | Maturity | Customer Engagement and Impact |
|---|---|---|---|---|
| Smart focus | Transformational | Engages customers when there is significant performance change. | Generally Available | 23 percent higher customer value, 90 percent representative customer satisfaction (CSAT) |
| Guided self-help | Superhuman | Solves customer issues in-product with guided support. | Generally Available | Reduces customer contact rate by 5.3pp, CSAT equal to human help |
| Customer auto-match | Superhuman | Serves customers faster, shortening the time between signup and value. | Generally Available | Reaches customer value 10x faster, 150 representatives worth of toil eliminated. |
| Representative smart-reply | Accelerant | Improves the quality of customer service chats. | Generally Available | 44 percent decrease in human agent chat, 48 percent increase in customer self help, 95 percent representative CSAT |

Customer Auto-Match: Serves Customers Faster, Shortening the Time Between Signup and Value

Helping Google Ads customers starts with understanding which campaigns and accounts they are using to achieve their digital marketing goals. At the scale of Google, this is a daunting task. Each year, customers create millions of new Google Ads accounts, and we collect little data about them to avoid adding unnecessary friction. Customers use Google Ads through different legal entities, divisions, third-party agencies, and consultants, and there is often no easy way to determine which person or group Google should engage with.

To identify which accounts belonged to each customer, we initially asked several hundred representatives to manually examine each new Ads account and connect it to the right entity in our customer hierarchy. Technically this method worked, but it was slow and difficult to scale to efficiently support the ever-growing set of Ads customers. We needed an automated solution.

Innovative AI application

We decided to implement a set of approaches that would more rapidly match our customers to their accounts:

- A combination of AI models that would identify the most likely owner of a new account in our customer hierarchy

- A rule-based system that let our teams manually define high-confidence rules and signals for handling simple cases

- A small team of analysts to identify the most complex and ambiguous accounts and build datasets for machine learning (ML) training and validation
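
The three approaches above can be read as a routing pipeline: simple cases are settled by rules, the rest go to a model, and whatever the model cannot decide confidently goes to analysts. The sketch below is one hypothetical arrangement of that idea, not the actual system; the `Match` type, the confidence threshold, and the stand-in rule and scoring functions are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Match:
    customer_id: Optional[str]
    source: str  # "rule", "model", or "analyst_queue"


def match_account(account, rules, score_candidates, min_confidence=0.9):
    """Route a new account: rules first, then the model, then human analysts."""
    # 1. High-confidence, manually defined rules handle the simple cases.
    for rule in rules:
        customer_id = rule(account)
        if customer_id is not None:
            return Match(customer_id, "rule")
    # 2. Otherwise take the model's most likely owner, if it is confident enough.
    scores = score_candidates(account)  # {customer_id: probability}
    if scores:
        best_id, confidence = max(scores.items(), key=lambda item: item[1])
        if confidence >= min_confidence:
            return Match(best_id, "model")
    # 3. Ambiguous accounts go to analysts, whose labels can become training data.
    return Match(None, "analyst_queue")


# Hypothetical stand-ins for a rule and a trained model.
KNOWN_DOMAINS = {"example.com": "cust-001"}


def domain_rule(account):
    return KNOWN_DOMAINS.get(account.get("email_domain"))


def toy_scores(account):
    if account.get("billing_name") == "Example Agency":
        return {"cust-002": 0.95, "cust-003": 0.03}
    return {"cust-004": 0.55, "cust-005": 0.40}


print(match_account({"email_domain": "example.com"}, [domain_rule], toy_scores))
print(match_account({"billing_name": "Example Agency"}, [domain_rule], toy_scores))
print(match_account({"billing_name": "Unknown LLC"}, [domain_rule], toy_scores))
```

Keeping the rule layer ahead of the model means the cheapest, most explainable decisions are made first, and the analyst queue receives only the cases where both automated layers decline to answer.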

Resulting customer engagement

Customers benefit the most with a 10-fold reduction in the time between signing up and receiving value. Google representatives also appreciate being relieved of some tedious toil. And we have increased productivity by the equivalent of 150+ employees annually.

Representative Smart-Reply: Improves the Quality of Customer Service Chats

Google Support offers personalized one-on-one support over chat, email, and phone to certain consumer and business customers. Initially, we relied on human representatives alone, but as our range of products has become more complex, it has become hard for any one person to remember the latest policies for every market and product. We also wanted to expedite resolutions and increase customer satisfaction amidst this growing complexity.

Innovative AI application

We first invested in AI-recommended replies for representatives. During a live chat, the representative sees a suggestion for the next best response to send to the customer. The representative chooses to either approve the AI suggestion or override it with a human input. The system is trained on representative transcripts and optimized by tracking representative acceptance and resolution. Each interaction improves the model’s future recommendations.

After success with early iterations of this tool, we started conducting supervised experiments for Smart Replies. In this phase, the AI automatically sends its chosen reply to the customer after a certain time, so long as the human representative does not opt out.
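
The difference between the two phases comes down to what happens when the representative does nothing. The sketch below models that decision under stated assumptions: the mode names, the `RepAction` type, and the 10-second timeout are hypothetical (the article says only that the reply is sent "after a certain time"), and this is not the production logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RepAction:
    kind: str                   # "approve", "override", or "none"
    text: Optional[str] = None  # the representative's own reply when overriding
    at_seconds: float = 0.0     # when the representative acted


def resolve_reply(suggestion, action, mode, timeout_seconds=10.0):
    """Return (text_sent, sender), or (None, None) if nothing is sent yet.

    mode="opt_in":  the AI suggestion is sent only if the representative approves.
    mode="opt_out": the AI suggestion is sent automatically at the timeout
                    unless the representative acts first.
    """
    if mode not in ("opt_in", "opt_out"):
        raise ValueError(f"unknown mode: {mode}")
    # In opt-out mode, an action after the timeout is too late to matter.
    acted_in_time = action.kind != "none" and (
        mode == "opt_in" or action.at_seconds < timeout_seconds
    )
    if acted_in_time and action.kind == "override":
        return action.text, "representative"
    if acted_in_time and action.kind == "approve":
        return suggestion, "ai_approved"
    if mode == "opt_out":
        return suggestion, "ai_auto"
    return None, None


suggestion = "I can help with that. Could you share the order number?"
print(resolve_reply(suggestion, RepAction("none"), "opt_in"))
print(resolve_reply(suggestion, RepAction("none"), "opt_out"))
print(resolve_reply(suggestion, RepAction("override", "One moment, please.", 3.0), "opt_out"))
```

In both modes the representative's override wins when it arrives in time; only the default for inaction changes, which is what shifts the human role from author to supervisor.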

This evolution from representative opt-in to opt-out suggests a future in which human representatives can move from handling cases manually to supervising AI with little direct involvement. Human agents could then focus on managing complex customer escalations. Recently, we even observed, for the first time, the AI model handling a complex support issue with no intervention from the human supervisor.

Resulting customer engagement

Google representatives loved the assistance with routine cases. Customer satisfaction reached 95 percent and early results indicated that customers resolved their issues both faster and better. This improvement was indicated by a 44 percent reduction in manual representative messages in chats.⁷ The use of smart-reply also led to a 48 percent⁸ increase in customer selection of self-guided support pages. We have therefore reduced our dependence on fallible human efforts in high-pressure, repetitive situations while improving the help we offer our customers.

Google’s Technology Architecture for AI in Customer Engagement

To power the case studies above, we built a technology architecture (Figure 3) oriented toward the customer’s lifecycle, increasingly engaging with customers to better understand their goals while providing assistance and guidance that’s easy to use. This architecture has three key components:

- The Customer Understanding Data Lake collects, curates, and, without sacrificing privacy, scrutinizes data to create a complete picture of our Ads customers and power our AI layer.

- Our machine learning engine is composed of Google’s AI/ML technologies, finely tuned to solve complex and unique problems.

- Finally, artificial intelligence insights and actions are carefully integrated into various customer touchpoints, such as marketing sites, help centers, core products, and sales tools, as well as into customers’ journeys, actions, and context.

Each element of our architecture was custom-built and optimized to help our customers achieve their business goals with as little friction as possible.

What Leaders Can Learn

Measurement rigor

To confidently invest in a portfolio of AI projects, we must measure the impact of each project and understand which bets paid off. We scrutinize the total impact on our customers and our company, which is a product of both the scale and value of each project.

We run randomized controlled trials (RCTs, or A/B tests) using proven data science techniques, accepting only statistically significant results, typically at a 95 percent confidence level.
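
As a rough illustration of such an acceptance rule, the sketch below compares average customer spend between a control group and a treatment group and accepts the result only if the difference is significant at the 5 percent level. It assumes a two-sided test with a normal approximation, which is reasonable only for large samples; the article does not specify the test used, and the function name and data are hypothetical.

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def ab_test(control, treatment, alpha=0.05):
    """Return (difference_in_means, p_value, accepted) for two samples.

    Two-sided test with unequal variances and a normal approximation,
    an illustrative choice suited to large samples.
    """
    diff = mean(treatment) - mean(control)
    std_err = sqrt(variance(control) / len(control)
                   + variance(treatment) / len(treatment))
    if std_err == 0:
        raise ValueError("both samples are constant; the test is undefined")
    z = diff / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return diff, p_value, p_value < alpha


# Hypothetical spend per customer over the measurement window.
control_spend = [100.0 + (i % 7) for i in range(200)]
treated_spend = [103.0 + (i % 7) for i in range(200)]
diff, p_value, accepted = ab_test(control_spend, treated_spend)
print(f"lift: {diff:.2f}, p-value: {p_value:.4f}, accepted: {accepted}")
```

A result that fails this check is not evidence that the feature has no effect; it may simply mean the experiment needs more customers or more time.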

To ensure consistency, we do not allow teams to grade their own work but rather have a centralized, independent group of data scientists and business experts who certify measurements and provide guidance. We are confident that we are delivering clear and quantified value to customers and powering growth in Google’s Ads business.

Because of the rigor required to show statistically significant results, this certified measure of impact is inherently a lagging indicator. To make decisions along the way, we consider other leading indicators such as sentiment, repeat usage, task success, and assistance feedback from both the customer and the representative.

Sentiment is measured both within a workflow (transactional) and outside (relationship). Our AI-powered experiences score 10pp to 20pp higher than non-AI-powered ones. They have been key to our cracking the 75 percent CSAT threshold.

Repeat usage is an improved measure of coverage and adoption that allows us to detect early promise in a new AI feature. In the smart-reply example, this might be the difference between something being used a few times a day and being used several times in every chat. As we saw in the case of manual chat improvements, performance can be improved by more than 40pp.

Task success is a broad term for achieving a customer’s or representative’s objective. In sales, it might describe a pitch rate or win rate, while in support it could describe resolution on the first attempt or total resolution time. Historical baselines give us a clear threshold to shoot for and we have seen individual AI solutions increase success rates by ten times on hard-to-improve tasks.

Assistance feedback helps us to understand when an AI failed and required human intervention. We intentionally design loops that have a human available to train our systems. These representatives increase our confidence that an AI solution is ready to go directly to the customer. We use this as an indicator to determine how quickly we are learning and training the system and to discover where it may need guardrails.

Projects that successfully move these leading indicators often go on to produce lagging indicator success as well. We use a three-stage funnel to move from estimation to validation to certified impact, comparing results with historical data at each step.

Support frameworks

With customer value and ROI as our north star, we found that we needed frameworks to align our teams, providing guidance for building in the short term while moving towards a long-term aspiration. These frameworks delineate what exists today as well as what should exist in the future, mapping out a coordinated path to get there while keeping us true to our north star.

For example, the customer success flywheel clarified how deeply we needed to understand our customers in order to provide them with optimal value. The four-square framework maps customer value and human potential, offering a methodology by which to identify, develop, and prioritize opportunities.

Tips on leveraging AI

With the growing number of AI capabilities and business applications, people are often tempted to leap in to avoid missing out. This impulse leads many organizations to spend considerable time trying out various technologies and solutions. To reach the market briskly with maximum impact, we recommend three steps:

- Identify the most important uses before picking the technology.

- Treat AI solutions as building blocks that you can assemble to solve a bigger problem.

- Develop prototypes with simple solutions with a focus on learning.

Our effort to manage the exponential demand on our marketing, sales, and support teams led us to AI-powered initiatives. We optimized each experience for different business goals, all developed with our customer-first principle which helped us to deliver the best to our customers. We used the prioritization framework as a second, important guide, challenging teams to push AI past the conventional boundaries of human ability and to prevent their pursuing easy wins that do not prioritize our customers’ goals.

Our journey will never be done. We continue to look for new ways to deliver more value to our customers.

Author Bio

The Google Customer Engagement team of authors consists of fourteen accomplished professionals. Tim Frank (lead author) has held various product management leadership positions in ads over the last ten+ years. His expertise includes computer/human interaction and human incentive systems, both of which are foundational to improving customer engagement. Ravi Narasimhan, Qiushuang (Autumn) Zhang, Roman Karachinsky, Tony Li, Vicky Ge, Abheek Gupta, and Sandeep Beri bring invaluable insights as leaders in product management. Aastha Gaur and Doris Neubauer provide a strong user experience perspective, aligning customer needs and business outcomes. Engineering leads Leo Cheng and Ian Suttle lend their expertise in machine learning, intelligent applications, and automated system architecture. Natalie Mason leads communications while Shashi Upadhyay is our general manager.

Endnotes

1. CSAT is measured via email and we interpret summed responses “happier than neutral” as satisfied.

2. https://www.deepmind.com/research

3. https://research.google/teams/brain/

4. For example, multi-task unified model (MUM) and language models for dialog applications (LaMDA).

5. Specifically 95 percent CI 3.9pp to 6.7pp

6. https://en.wikipedia.org/wiki/Long_short-term_memory

7. Specifically 95 percent CI 42.1 percent to 45.9 percent

8. Specifically 95 percent CI 46.1 percent to 49.9 percent